GCP Data Engineer Question 13

GCP: Fast-Tracking PySpark Migrations! #shorts

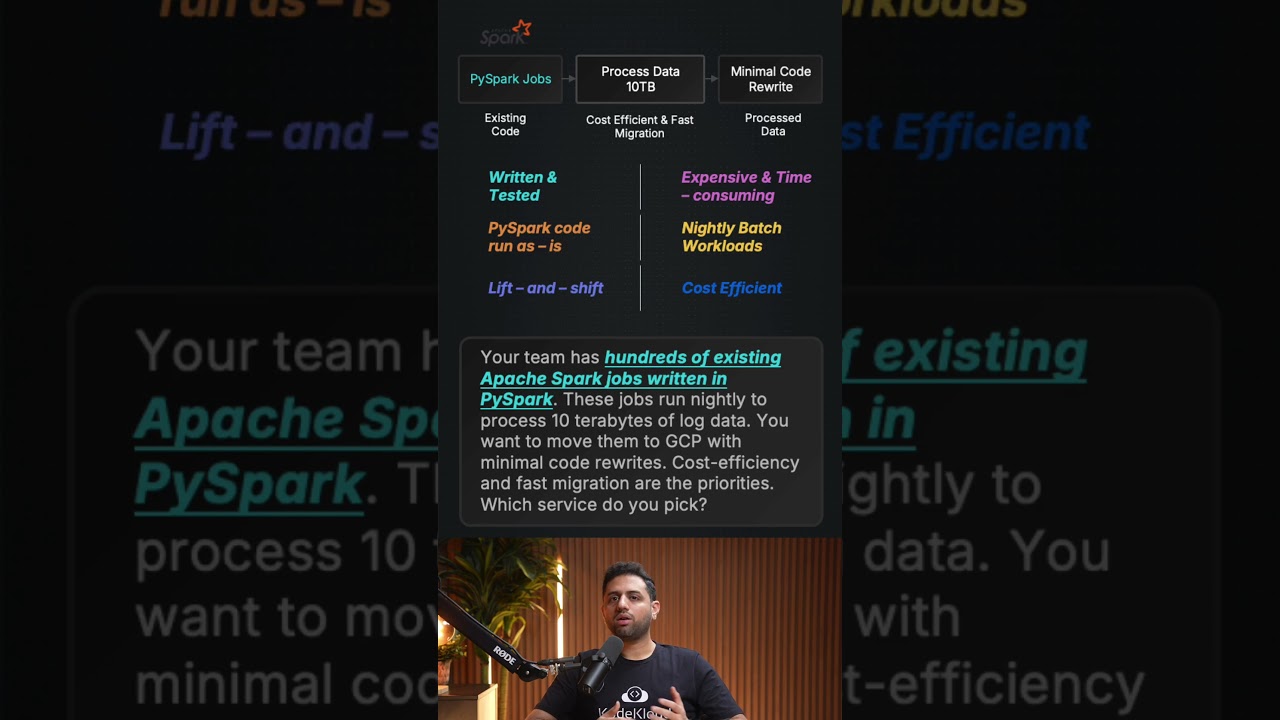

When migrating hundreds of existing PySpark batch jobs that process 10 TB of nightly data to Google Cloud, Cloud Dataproc is the best fit among the options: it offers a managed Apache Spark and Hadoop environment, so the jobs can be lifted and shifted with little or no code change (typically limited to storage paths and cluster configuration). Dataflow would force your team into a large, time-consuming rewrite onto the Apache Beam programming model, while BigQuery scheduled queries run SQL only and cannot execute Python-based Spark scripts. Cloud Composer is an orchestration service: it can schedule and trigger jobs, but it is not the data processing engine this workload needs. Choosing Dataproc balances cost efficiency and deployment speed while keeping your core analytics logic intact.
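To make the lift-and-shift idea concrete, here is a minimal sketch of how a batch of unchanged PySpark scripts might be submitted to an existing Dataproc cluster with `gcloud dataproc jobs submit pyspark`. The bucket, script names, cluster name, region, and the `--run-date` job argument are all hypothetical placeholders, not values from the original question. The sketch only builds and prints the commands; it does not call Google Cloud.

```python
import shlex


def build_submit_command(script_uri, cluster, region, job_args=()):
    """Return the argv list that submits one PySpark script to Dataproc.

    The script itself is passed through untouched; only where it runs changes.
    """
    cmd = [
        "gcloud", "dataproc", "jobs", "submit", "pyspark", script_uri,
        f"--cluster={cluster}",
        f"--region={region}",
    ]
    if job_args:
        # Everything after "--" is handed to the PySpark script, not to gcloud.
        cmd.append("--")
        cmd.extend(job_args)
    return cmd


# Hypothetical script locations: assumes the existing jobs were copied
# to a Cloud Storage bucket as-is.
NIGHTLY_JOBS = [
    "gs://example-bucket/jobs/clean_events.py",
    "gs://example-bucket/jobs/aggregate_sales.py",
]

if __name__ == "__main__":
    for script in NIGHTLY_JOBS:
        argv = build_submit_command(
            script,
            cluster="nightly-cluster",   # hypothetical cluster name
            region="us-central1",        # hypothetical region
            job_args=["--run-date", "2024-01-01"],  # hypothetical job argument
        )
        # Print a copy-pasteable shell command instead of executing it.
        print(shlex.join(argv))
```

In practice the same list could be fed to `subprocess.run` or to an orchestrator such as Cloud Composer, which would trigger the jobs while Dataproc does the processing.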

#GCP #Dataproc #DataEngineering #ApacheSpark #PySpark #CloudMigration #BigData #GoogleCloud #Dataflow #CloudComputing #GCPCertification #TechTips #DataArchitecture


KodeKloud

...